views

Web scraping is a useful tool, but it could also rise to ethical and legal ambiguity. To begin with, a website can receive thousands of requests each second. Browsing at such amazing speeds is bound to attract attention. Such large amounts of requests are likely to clog up a website's servers and, in extreme situations, might be deemed a denial-of-service attack. Similarly, any website that requires a login may contain information that is not regarded as public as a result, and scraping such websites could put you in legal trouble.

A programmer may be required to work with Google (Map) Reviews in a variety of situations. Anyone experienced with the Google My Business API knows that getting reviews using the API requires an account ID for each location (business). Scraping reviews can be quite useful in situations when a developer wants to work with evaluations from several sites (or does not have access to a Google business account).

What is Web Scraping?

The technique of obtaining data from web pages is known as web scraping. Various libraries that can assist you with this, including:

Beautiful Soup is a great tool for parsing the DOM, as it simply extracts data from HTML and XML files.

Scrapy is an open-source program that allows you to scrape huge databases at scale.

Selenium is a browser automation tool that aids in the interaction of JavaScript in scraping tasks (clicks, scrolls, filling in forms, drag, and drop, moving between windows and frames, etc.)

You will navigate the page with Selenium, add more content, and then parse the HTML file with Beautiful Soup.

Selenium

You can install Selenium using pip or conda (package management system)

#Installing with pip pip install selenium#Installing with conda install -c conda-forge selenium

To interact with the chosen browser, Selenium requires a driver. As a personal choice, you will be utilizing Chrome Driver. Here are some of the most often used browser drivers:

BeautifulSoup

BeautifulSoup will be used to interpret the HTML page and retrieve the data you need. (in our case, review text, reviewer, date, etc.) BeautifulSoup must be installed first.

#Installing with pip pip install beautifulsoup4#Installing with conda conda install -c anaconda beautifulsoup4

Web Driver Installation and URL Input

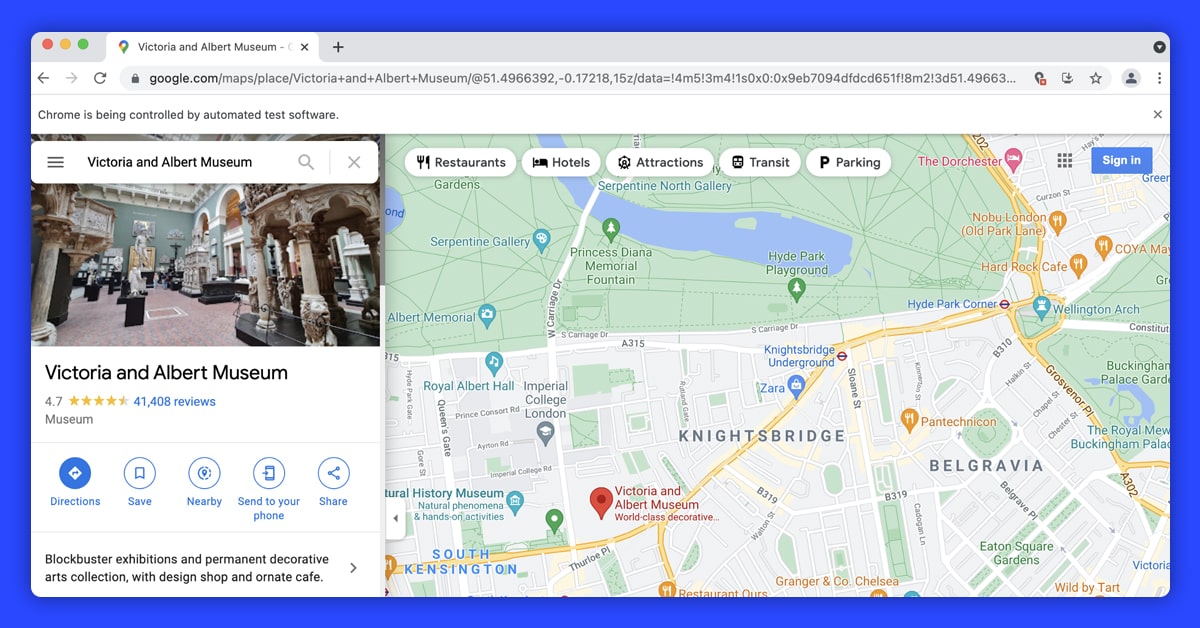

Import and initialize the web driver, that's all there is to it. Then you will need to send the Google Maps URL of the location we wish to receive reviews for to the get method:

from selenium import webdriver driver = webdriver.Chrome()#London Victoria & Albert Museum URL url = 'https://www.google.com/maps/place/Victoria+and+Albert+Museum/@51.4966392,-0.17218,15z/data=!4m5!3m4!1s0x0:0x9eb7094dfdcd651f!8m2!3d51.4966392!4d-0.17218' driver.get(url)

Navigating

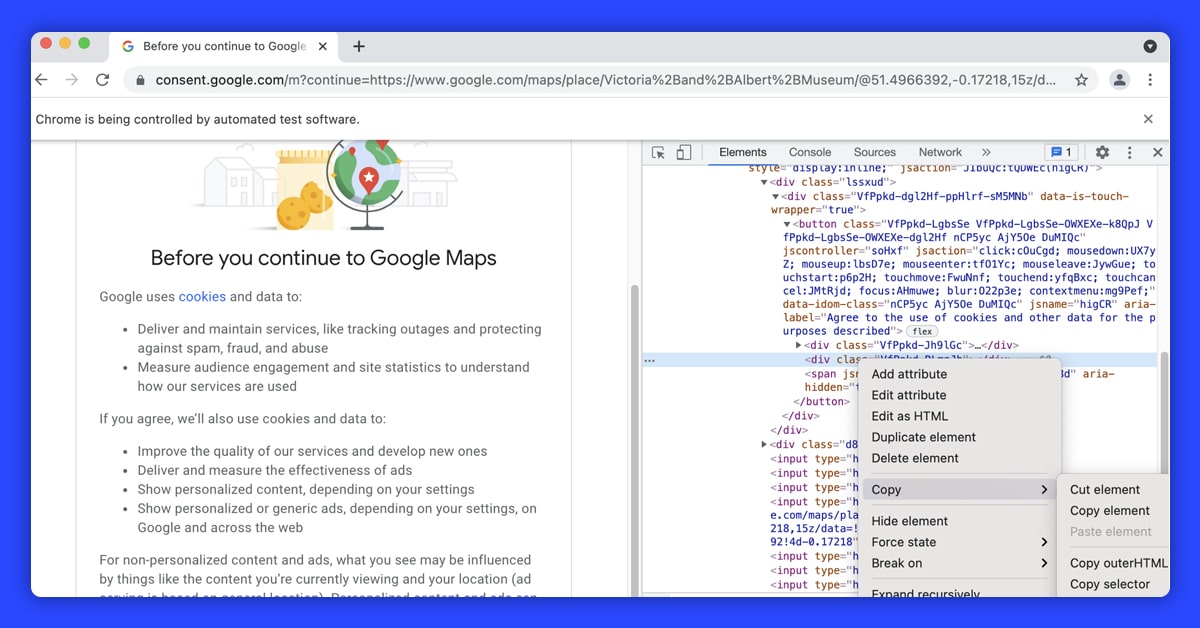

Before going to the actual web address specified via URL variable, the web driver is very likely to come across the consent Google page to agree to cookies. If that's the case, you can proceed by clicking the "I agree" option.

To explore the website, Selenium provides numerous methods; in this case, find element by xpath(): is used.

driver.find_element_by_xpath('//*[@id="yDmH0d"]/c-wiz/div/div/div/div[2]/div[1]/div[4]/form/div[1]/div/button').click()#to make sure content is fully loaded we can use time.sleep() after navigating to each page

import time

time.sleep(3)

This is when things get a little complicated. The URL in our example took us to the right site we needed to display, and we tapped on the 41,408 comments button to load comments.

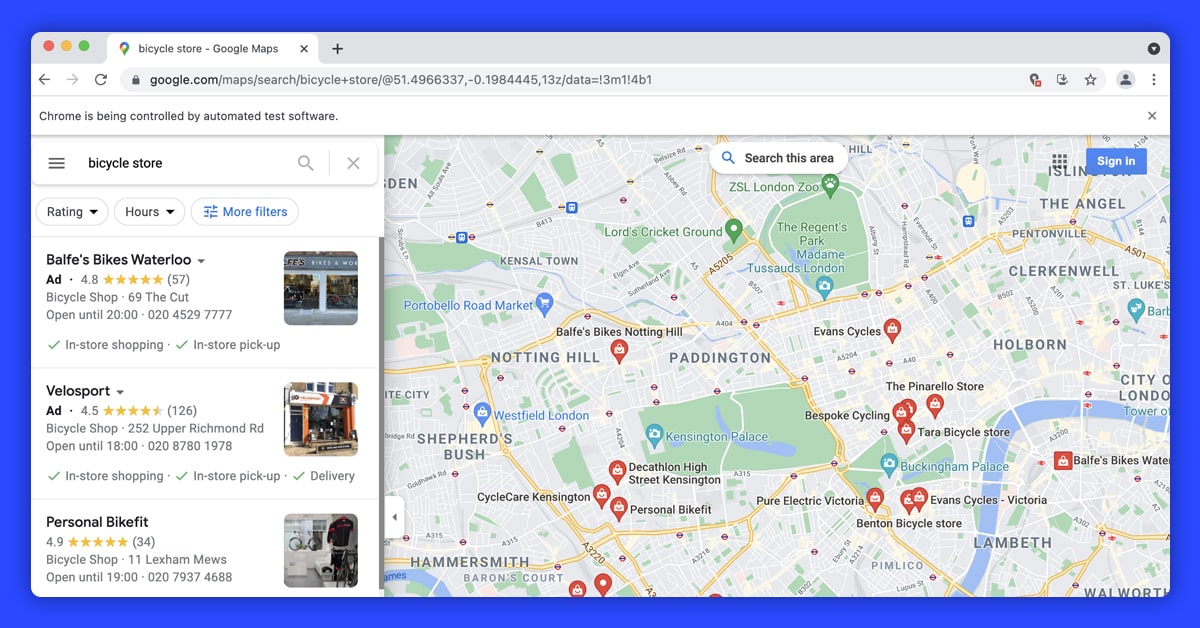

On Google Maps, however, there are a few various sorts of profile pages, and in many cases, the location will be presented with a bunch of other places on the left side, with adverts likely at the top of the list. The following URL, for example, will take us to the following page:

url = 'https://www.google.com/maps/search/bicycle+store/@51.5026862,-0.1430242,13z/data=!3m1!4b1'

You will develop an exception handler code and go to load reviews so you don't get caught on these different types of layouts or load the wrong page.

try:

driver.find_element(By.CLASS_NAME, "widget-pane-link").click()

except Exception:

response = BeautifulSoup(driver.page_source, 'html.parser')

# Check if there are any paid ads and avoid them

if response.find_all('span', {'class': 'ARktye-badge'}):

ad_count = len(response.find_all('span', {'class': 'ARktye-badge'}))

li = driver.find_elements(By.CLASS_NAME, "a4gq8e-aVTXAb-haAclf-jRmmHf-hSRGPd")

li[ad_count].click()

else:

driver.find_element(By.CLASS_NAME, "a4gq8e-aVTXAb-haAclf-jRmmHf-hSRGPd").click()

time.sleep(5)

driver.find_element(By.CLASS_NAME, "widget-pane-link").click()

The steps in the code above are as follows:

- Look for the reviews option by class name (in the first scenario, the driver landed on a page) and click it.

- If the HTML isn't processed, examine the page's contents with BeautifulSoup.

- Count the advertising if the answer (or the page) includes paid ads, then go to the Google map profile page for the target location. Otherwise, go to the Google map profile page for the target location.

- Click the reviews button (class name=widget-pane-link) to open the reviews.

Scrolling and Loading More Reviews

This should lead us to the webpage with the reviews. Although the first download would only provide ten reviews, a subsequent scroll would bring another ten. To have all of the reviews for the area, we'll calculate how many cycles we need to browse and then apply the chrome driver's execute script() method.

#Find the total number of reviews

total_number_of_reviews = driver.find_element_by_xpath('//*[@id="pane"]/div/div[1]/div/div/div[2]/div[2]/div/div[2]/div[2]').text.split(" ")[0]

total_number_of_reviews = int(total_number_of_reviews.replace(',','')) if ',' in total_number_of_reviews else int(total_number_of_reviews)#Find scroll layout

scrollable_div = driver.find_element_by_xpath('//*[@id="pane"]/div/div[1]/div/div/div[2]')#Scroll as many times as necessary to load all reviews

for i in range(0,(round(total_number_of_reviews/10 - 1))):

driver.execute_script('arguments[0].scrollTop = arguments[0].scrollHeight',

scrollable_div)

time.sleep(1)

The scrollable component is initially found inside the while loop above. The scroll bar is initially located at the top, which means the vertical placement is 0. We set the vertical position(.scrollTop) of the scroll element(scrollable div) to its height by giving a simple JavaScript snippet to the chrome driver(driver.execute script) (.scrollHeight). The scroll bar is being dragged vertically from position 0 to position Y. (Y = whatever height you choose)

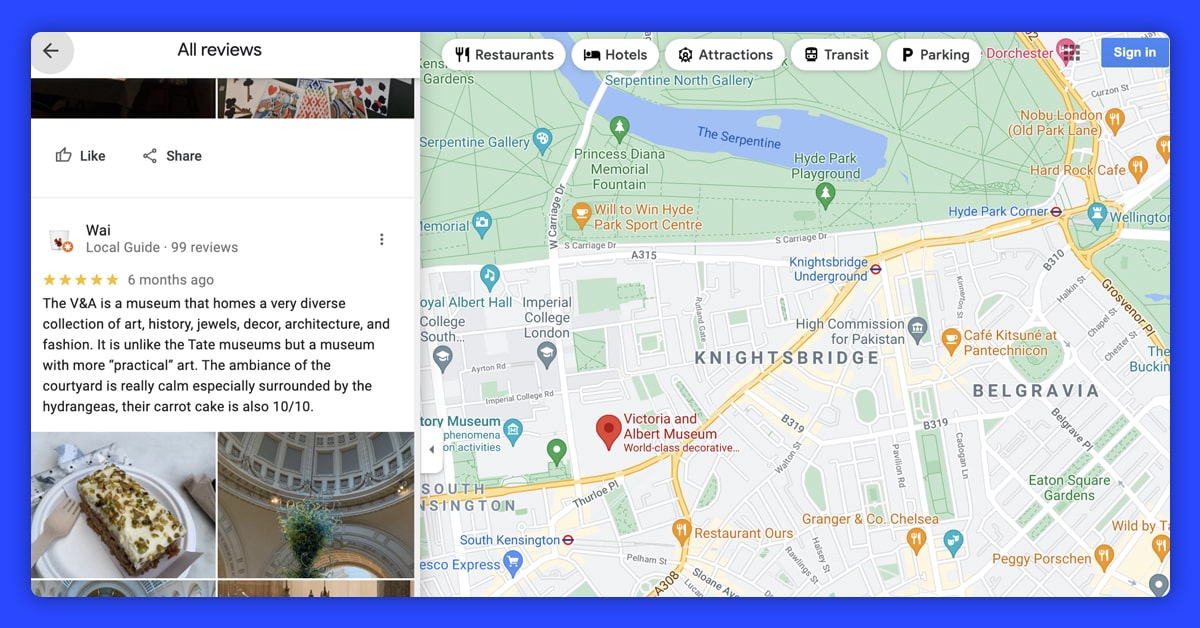

Parsing HTML and Data Extraction

response = BeautifulSoup(driver.page_source, 'html.parser')

reviews = response.find_all('div', class_='ODSEW-ShBeI NIyLF-haAclf gm2-body-2')

We can now construct a function to get important data from the HTML-parsed reviews result set. The code below would accept the answer set and provide a Pandas DataFrame with pertinent extracted data in the Review Rate, Review Time, and Review Text columns.

def get_review_summary(result_set):

rev_dict = {'Review Rate': [],

'Review Time': [],

'Review Text' : []}

for result in result_set:

review_rate = result.find('span', class_='ODSEW-ShBeI-H1e3jb')["aria-label"]

review_time = result.find('span',class_='ODSEW-ShBeI-RgZmSc-date').text

review_text = result.find('span',class_='ODSEW-ShBeI-text').text

rev_dict['Review Rate'].append(review_rate)

rev_dict['Review Time'].append(review_time)

rev_dict['Review Text'].append(review_text)

import pandas as pd

return(pd.DataFrame(rev_dict))

Feel free to contact ReviewGators today if you are looking to scrape Google reviews using Selenium Python.

Request for a quote!!